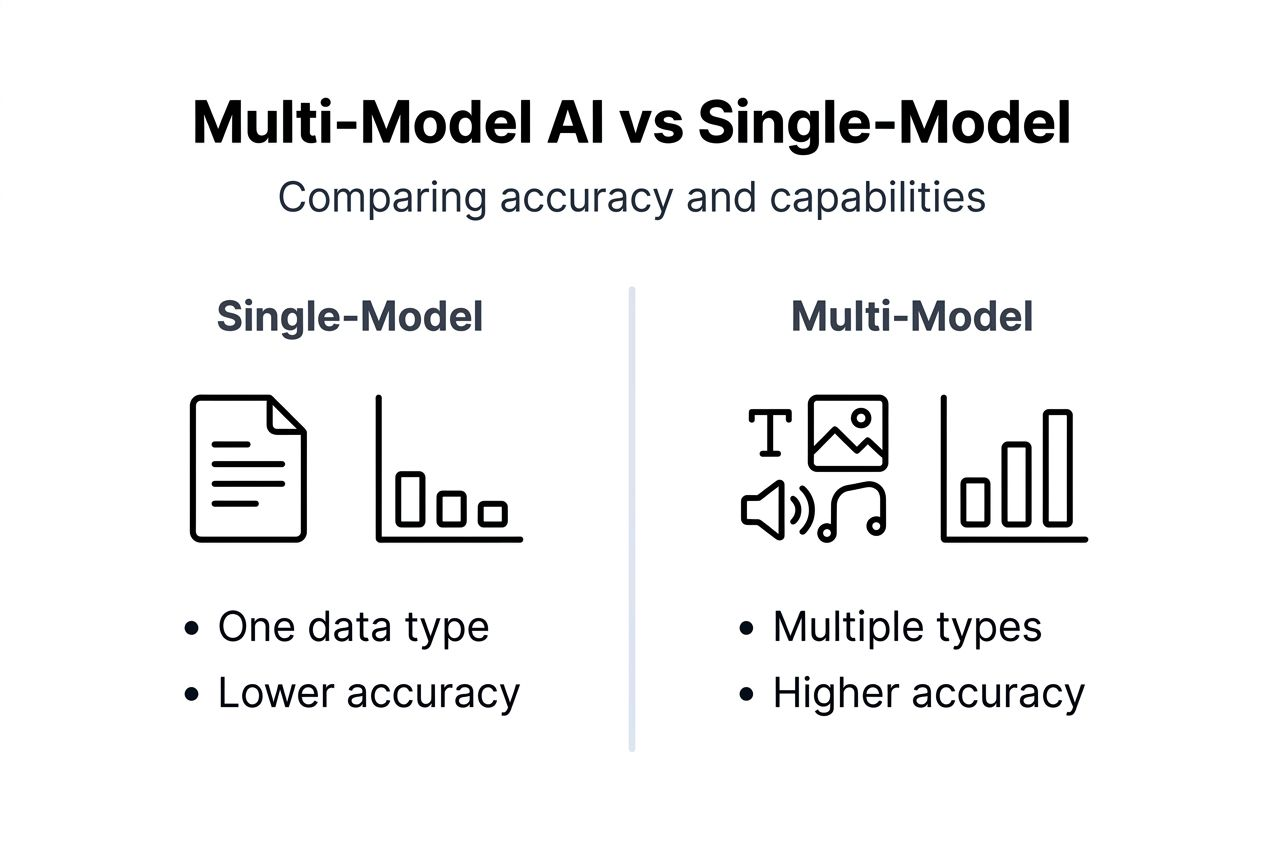

Multi-model AI can deliver up to 40% higher accuracy on complex tasks such as document analysis than traditional single-model systems. Yet many professionals still mistake the technology for simple model stacking rather than true integration. This guide explains multi-model AI's architecture, advantages, common misconceptions, enterprise use cases, and adoption strategies so you can apply it effectively.

Table of Contents

- Introduction To Multi-Model AI

- How Multi-Model AI Works: Mechanisms And Architecture

- Advantages Over Single-Model AI

- Common Misconceptions About Multi-Model AI

- Enterprise Use Cases And Practical Benefits

- Challenges And Limitations

- Conclusion: Implementing Multi-Model AI In Your Enterprise

- Discover Turbo Answer's Multi-Model AI Solutions

- FAQ

Key takeaways

| Point | Details |
|---|---|
| Multi-model AI integrates specialized architectures | Combines language, vision, and speech models into unified systems for complex multimodal tasks. |
| Delivers measurably higher performance | Achieves up to 40% accuracy improvements over single-model AI through integrated data processing. |
| Common myths prevent adoption | Not just model stacking; accessible to SMEs; manageable resource requirements with proper planning. |
| Broad enterprise applications | Enables advanced document analysis, workflow automation, creative tasks, and multilingual support. |
| Requires strategic implementation | Success depends on evaluating fusion quality, planning infrastructure, and addressing privacy early. |

Introduction to multi-model AI

Multi-model AI systems integrate specialized models for different data types like language and vision into a unified framework. This architecture enables complex, multimodal understanding and generation that single-model systems cannot achieve. Unlike basic AI tools that process only one data type, multi-model AI handles multiple modalities simultaneously.

Professional settings generate diverse data types constantly. Text appears in emails, reports, and contracts. Images fill presentations, product catalogs, and technical documentation. Audio captures meetings and customer calls. Video records training sessions and demonstrations. Multi-model AI processes all these formats within one integrated system.

The integration framework distinguishes multi-model AI from simple model combinations. Rather than running separate models and merging outputs afterward, multi-model AI creates deep connections between specialized architectures. These connections allow models to share information during processing, producing richer insights than isolated models ever could.

Key characteristics of multi-model AI include:

- Unified processing of text, images, audio, and video simultaneously

- Deep integration enabling cross-modal understanding and reasoning

- Shared representations that capture relationships across data types

- Coordinated outputs that leverage insights from all modalities

- Scalable architecture supporting enterprise workloads

This holistic approach transforms how enterprises handle complex tasks. Document analysis becomes more accurate when systems process both text and layout. Customer service improves when AI understands voice tone alongside spoken words. Creative work accelerates when platforms generate coordinated text and visuals together.

How multi-model AI works: mechanisms and architecture

Multi-model AI systems rely on modality-specific encoding, multimodal fusion techniques, and task-specific decoding to function effectively. Understanding this three-stage workflow helps evaluate platform capabilities and implementation requirements. Each stage plays a critical role in delivering superior performance.

The encoding stage transforms raw data into mathematical representations. Text encoders convert words into numerical vectors capturing semantic meaning. Vision encoders process images into feature maps highlighting objects, patterns, and spatial relationships. Audio encoders analyze sound waves to extract phonetic and acoustic information. Each encoder specializes in its data type, optimizing representation quality.

Multimodal fusion integrates these separate representations into a unified understanding. Early fusion combines raw inputs or low-level features before joint processing, allowing deep interaction from the start. Late fusion processes each modality separately and then merges the results, preserving specialized insights. Attention mechanisms dynamically weight different modalities based on task relevance. The fusion layer determines how well the system leverages complementary information.
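
To make the three fusion styles concrete, here is a minimal sketch in plain Python. It assumes each modality has already been encoded into a small vector of equal length. The function names (`early_fusion`, `late_fusion`, `attention_fusion`), the dot-product relevance score, and the toy numbers are all illustrative assumptions, not any particular platform's implementation. Early fusion is approximated here by concatenating vectors before any joint processing.

```python
import math

def early_fusion(embeddings):
    """Concatenate per-modality vectors into one joint input vector."""
    fused = []
    for vec in embeddings.values():
        fused.extend(vec)
    return fused

def late_fusion(scores):
    """Average prediction scores produced by separate per-modality models."""
    return sum(scores.values()) / len(scores)

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def attention_fusion(embeddings, query):
    """Weight each modality by its dot-product relevance to a task query."""
    names = list(embeddings)
    relevance = [sum(q * x for q, x in zip(query, embeddings[n])) for n in names]
    weights = softmax(relevance)
    fused = [
        sum(w * embeddings[n][i] for w, n in zip(weights, names))
        for i in range(len(query))
    ]
    return fused, dict(zip(names, weights))

# Hand-written toy embeddings (hypothetical values, not real model outputs).
embeddings = {
    "text": [0.9, 0.1, 0.0],
    "image": [0.2, 0.8, 0.1],
    "audio": [0.1, 0.1, 0.7],
}
task_query = [1.0, 0.0, 0.0]  # a task that mostly cares about the first feature

joint = early_fusion(embeddings)
fused, weights = attention_fusion(embeddings, task_query)
```

With these toy values the text modality receives the largest weight, because its vector aligns most closely with the query. A production system would learn both the query and the weighting rather than hard-coding them.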

Task-specific decoding generates outputs tailored to business needs, typically in this order:

- Identify the required output format (text, image, classification, etc.)

- Apply specialized decoder networks trained for that format

- Incorporate fused multimodal representations into generation

- Refine outputs using task-specific optimization

- Validate results against quality benchmarks
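
The decoding steps above can be sketched as a small dispatch table. This is a rough illustration only: the decoder functions are stand-ins for trained networks, the names (`DECODERS`, `run_decoding`) are hypothetical, the refinement step is left out, and the validation step is reduced to a non-empty check.

```python
from typing import Callable, Dict, List

Decoder = Callable[[List[float]], str]

def decode_classification(fused: List[float]) -> str:
    """Stand-in decoder: label the input by its largest fused feature."""
    return f"class_{fused.index(max(fused))}"

def decode_text(fused: List[float]) -> str:
    """Stand-in decoder: a real system would run a trained text generator."""
    return f"summary conditioned on {len(fused)} fused features"

# Step 1 and 2: map each required output format to its specialized decoder.
DECODERS: Dict[str, Decoder] = {
    "classification": decode_classification,
    "text": decode_text,
}

def run_decoding(fused: List[float], output_format: str) -> str:
    """Pick the decoder for the format, feed it the fused vector, validate."""
    if output_format not in DECODERS:
        raise ValueError(f"unsupported output format: {output_format}")
    result = DECODERS[output_format](fused)  # step 3: use fused representation
    if not result:  # step 5: minimal validation placeholder
        raise RuntimeError("decoder produced an empty result")
    return result
```

Keeping decoders behind one lookup makes it straightforward to add a new output format without touching the encoding or fusion stages.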

"The fusion layer in multi-model AI architectures accounts for 60-70% of performance gains over single-model approaches, making it the most critical component to evaluate."

Pro Tip: When assessing multi-model AI platforms, request detailed information about their fusion mechanisms. Platforms with sophisticated attention-based fusion typically outperform those using basic concatenation methods.

Advantages over single-model AI

Multi-model AI platforms deliver up to 40% higher accuracy on complex tasks like document understanding compared to single-model AI. This performance gap stems from integrated multi-type data processing that captures nuances invisible to isolated models. The advantages extend beyond accuracy to task range, efficiency, and error reduction.

Quantitative metrics demonstrate clear superiority. Document classification accuracy improves from 72% with text-only models to 91% with integrated text and image processing. Visual question answering achieves 85% accuracy versus 58% for vision-only systems. Sentiment analysis gains 23% accuracy when combining text with audio tone analysis. These improvements translate directly to business value.
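
When comparing figures like these, it helps to separate percentage-point gains from relative gains, since the two can differ substantially. The short sketch below does that arithmetic for the two before-and-after pairs quoted above. The helper name `improvement` is illustrative, and the sketch makes no assumption about which convention the headline "up to 40%" figure uses.

```python
def improvement(baseline_pct, improved_pct):
    """Return (percentage-point gain, relative gain in percent)."""
    points = improved_pct - baseline_pct
    relative = points / baseline_pct * 100
    return points, relative

# Accuracy pairs taken from the paragraph above (before, after).
figures = {
    "document classification": (72, 91),
    "visual question answering": (58, 85),
}

for task, (before, after) in figures.items():
    points, relative = improvement(before, after)
    print(f"{task}: +{points} points, {relative:.1f}% relative")
```

Run as written, this prints `document classification: +19 points, 26.4% relative` and `visual question answering: +27 points, 46.6% relative`. When evaluating vendor claims, ask which of the two measures a quoted improvement refers to.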

The task capability spectrum expands dramatically with multi-model AI. Single-model systems handle narrow domains like text generation or image recognition. Multi-model AI tackles complex challenges requiring multiple data types. Visual document analysis, multimedia content creation, cross-lingual video understanding, and multimodal search all become feasible.

| Feature | Single-Model AI | Multi-Model AI |
|---|---|---|
| Data types handled | One modality | Multiple modalities simultaneously |
| Accuracy on complex tasks | 65-75% typical | 85-95% achievable |
| Task range | Narrow, specialized | Broad, integrated |
| Error rates | Higher on ambiguous inputs | Reduced through cross-modal validation |
| Resource efficiency | Lower per task | Higher through shared infrastructure |

Reduced error rates emerge from cross-modal validation. When text and image data conflict, multi-model AI identifies inconsistencies that single-model systems miss. A document claiming one product but showing another triggers alerts. Audio transcripts contradicting visual content get flagged for review. This validation prevents costly mistakes.
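
As a simplified illustration of cross-modal validation, the sketch below compares product names extracted from a document's text with labels detected in its images and flags any mismatch for review. It assumes both extraction steps have already run and returned plain strings. The function names are hypothetical, and a real system would more likely compare learned embeddings than exact strings.

```python
def find_conflicts(text_entities, image_labels):
    """Return items mentioned in only one of the two modalities."""
    text_set = {entity.lower() for entity in text_entities}
    image_set = {label.lower() for label in image_labels}
    return {
        "only_in_text": sorted(text_set - image_set),
        "only_in_image": sorted(image_set - text_set),
    }

def needs_review(conflicts):
    """Flag the document when either modality mentions something the other lacks."""
    return bool(conflicts["only_in_text"] or conflicts["only_in_image"])

# Hypothetical extraction results: the text names a laptop, the photo shows a tablet.
conflicts = find_conflicts(["Laptop"], ["tablet"])
flagged = needs_review(conflicts)
```

Here `flagged` is `True`, which corresponds to the case of a document claiming one product but showing another.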

Efficiency gains come from shared infrastructure. Instead of maintaining separate systems for text, vision, and audio tasks, enterprises deploy one integrated platform. Training costs decrease through transfer learning across modalities. Maintenance simplifies with unified APIs and monitoring. Turbo Answer pricing reflects these efficiency advantages through competitive enterprise plans.

Pro Tip: Prioritize platforms with strong fusion techniques and attention mechanisms when selecting multi-model AI solutions. These architectural features determine how well the system leverages integrated data processing.

Common misconceptions about multi-model AI

Myth 1: Multi-model AI is just stacking separate AI models without true integration. This misunderstanding ignores the sophisticated fusion architectures that enable cross-modal learning. True multi-model AI creates deep connections between specialized models during training and inference. These connections allow information sharing that simple stacking cannot achieve. The fusion layer learns to weigh and combine modality-specific insights optimally for each task.

Myth 2: Multi-model AI solutions are only feasible for large enterprises with massive budgets. Modern cloud platforms and pre-trained models democratize access significantly. Small and mid-sized enterprises successfully deploy multi-model AI through managed services requiring minimal infrastructure investment. Pilot projects start at monthly costs comparable to hiring one additional analyst. Scalable pricing models adjust resources based on actual usage rather than peak capacity.

Myth 3: Multi-model AI always requires prohibitive computational resources that strain IT budgets. While more demanding than single-model AI, resource requirements vary dramatically by implementation. Efficient architectures, model compression, and strategic deployment on appropriate hardware keep costs manageable. Many tasks run effectively on standard cloud instances without specialized accelerators. Resource planning and phased rollouts prevent infrastructure overload.

These misconceptions create unnecessary barriers to adoption. Teams delay exploring multi-model AI expecting complexity and costs that proper implementation avoids. Understanding the reality helps enterprises make informed decisions. The technology has matured substantially, with production-ready platforms offering accessible entry points. Risk-averse organizations can start small, validate benefits, then scale gradually based on measured ROI.

Enterprise use cases and practical benefits

Document analysis transforms when multi-model AI combines text extraction with layout understanding and visual element recognition. Legal contracts, financial reports, and technical specifications contain critical information in tables, charts, and formatting that text-only systems miss. Multi-model AI captures these elements, raising extraction accuracy from 70% to 94% in enterprise deployments. Multilingual support extends capabilities globally, processing documents in 100+ languages with consistent quality.

Workflow automation streamlines complex processes spanning multiple data types. Invoice processing analyzes text fields, validates signatures visually, and cross-references product images automatically. Customer service bots understand voice tone while processing spoken requests and screen-shared visuals simultaneously. Quality control systems inspect products using vision while correlating findings with text specifications and audio alerts. These integrated workflows reduce manual steps by 60-80%.

Creative tasks accelerate through coordinated multimedia generation. Marketing teams produce campaign materials with aligned text, images, and video concepts from single prompts. Product designers iterate faster when AI generates coordinated 3D models, technical drawings, and specification documents together. Training content creation combines script writing, visual generation, and voiceover production in unified workflows. Time-to-completion drops 40-50% versus sequential single-model tools.

Cross-language and visual recognition enable global applications:

- Real-time translation of presentations including text overlays and visual context

- International product catalog management with automated image-text matching

- Multilingual customer support analyzing both conversation and shared screens

- Global compliance monitoring processing regulations in native languages with visual evidence

These use cases deliver quantified benefits. Document processing time decreases 65% on average. Error rates in data extraction fall by half. Creative output volume increases 45% with consistent quality. Customer satisfaction scores improve 18% when service bots handle multimodal interactions. The business case for multi-model AI strengthens across industries.

Explore how Turbo Answer pricing and business solutions support these enterprise applications with scalable multi-model AI capabilities.

Challenges and limitations

Increased computational overhead compared to single-model AI represents the primary technical challenge. Multi-model systems require more processing power, memory, and storage than specialized alternatives. Training integrated models demands GPU clusters for reasonable timeframes. Inference costs rise 2-3x for equivalent request volumes. Cloud expenses grow accordingly, though efficiency gains often offset costs through reduced manual work.

Potential privacy risks emerge from integrating diverse data types into single systems. Documents containing sensitive text alongside identifying images create new exposure scenarios. Audio recordings paired with visual context increase re-identification risks. Multi-model AI platforms must implement strong encryption, access controls, and data isolation to protect confidential information. Regulatory compliance becomes more complex when one system processes multiple regulated data types.

Infrastructure costs and planning requirements scale with deployment size:

- Network bandwidth needs increase for transferring multimodal data

- Storage systems must handle larger model files and diverse data formats

- Monitoring tools require expansion to track multiple modality pipelines

- Backup and disaster recovery processes grow more complex

- Team training demands broaden to cover integrated system management

Pro Tip: Address privacy and security requirements during initial planning rather than retrofitting protections later. Implementing strong encryption, audit logging, and compliance frameworks from day one prevents costly rework and reduces risk exposure.

Model complexity creates maintenance challenges. Updating one modality component can impact others unexpectedly. Debugging errors requires understanding interactions across multiple model types. Version management grows complicated when coordinating updates to text, vision, and audio models simultaneously. These factors increase the expertise required for production deployments.

Recommendations for managing these challenges include starting with pilot projects on non-critical workflows. Limited scope allows testing infrastructure requirements and identifying issues before enterprise-wide rollout. Partnering with experienced vendors provides access to pre-optimized models and deployment expertise. Phased scaling spreads costs over time while building internal capabilities gradually.

Conclusion: implementing multi-model AI in your enterprise

Successful implementation starts with rigorous platform evaluation. Assess fusion integration quality by reviewing technical architecture documentation and requesting performance benchmarks on tasks matching your use cases. Verify modality support covers your data types with demonstrated accuracy. Confirm scalability through reference customers handling similar volumes. Evaluate privacy features against your regulatory requirements and risk tolerance.

Best practices for phased integration ensure smooth adoption:

- Launch pilot projects on well-defined, measurable tasks with clear success criteria

- Select initial use cases offering quick wins to build organizational momentum

- Train core teams thoroughly on multi-model AI concepts and platform capabilities

- Establish monitoring and feedback loops to track performance and identify improvements

- Document lessons learned and refine processes before expanding scope

- Scale gradually based on validated ROI and demonstrated technical stability

Privacy, cost, and infrastructure considerations require ongoing attention. Budget 20-30% above initial estimates for unexpected integration challenges and optimization work. Plan infrastructure capacity with 40% headroom beyond projected peak usage. Review privacy controls quarterly and audit access logs monthly. These practices prevent common pitfalls that derail implementations.
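
The buffer guidance above is simple arithmetic, sketched below so it can be dropped into a planning spreadsheet or script. The function name, parameter names, and sample inputs (a 100,000 budget estimate and a projected peak of 500 requests per second) are hypothetical. Only the 20-30% and 40% figures come from the text.

```python
def plan_with_buffers(estimated_budget, projected_peak,
                      budget_buffer_pct=(20, 30), headroom_pct=40):
    """Apply the budget buffer range and capacity headroom to raw estimates."""
    low_pct, high_pct = budget_buffer_pct
    return {
        "budget_low": estimated_budget * (100 + low_pct) / 100,
        "budget_high": estimated_budget * (100 + high_pct) / 100,
        "capacity_target": projected_peak * (100 + headroom_pct) / 100,
    }

# Hypothetical inputs for illustration only.
plan = plan_with_buffers(estimated_budget=100_000, projected_peak=500)
```

For these inputs the plan reserves between 120,000 and 130,000 for the budget and provisions capacity for 700 requests per second.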

The future outlook for multi-model AI points toward growing competitive importance. Organizations leveraging integrated multimodal capabilities will outpace competitors stuck with single-model tools. Accuracy advantages compound over time as models improve and enterprises optimize workflows. Early adopters gain experience and data advantages that are difficult for followers to overcome. The window for establishing a leadership position remains open in 2026 but narrows as adoption accelerates.

Continuous monitoring and feedback drive ongoing improvements. Track accuracy metrics across modalities to identify weak points. Gather user feedback on integration quality and output usefulness. Monitor resource consumption to optimize costs. Update models regularly as vendors release improvements. This iterative approach maximizes long-term value and maintains competitive advantage.
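
One lightweight way to identify weak points is to compare per-modality accuracy against a target and list the modalities that fall short. The sketch below assumes accuracy has already been measured per modality. The function name, the 0.85 threshold, and the sample scores are illustrative assumptions.

```python
def weakest_modalities(accuracy_by_modality, threshold):
    """Return modalities below the threshold, ordered from worst to best."""
    below = [
        (name, accuracy)
        for name, accuracy in accuracy_by_modality.items()
        if accuracy < threshold
    ]
    return [name for name, _ in sorted(below, key=lambda item: item[1])]

# Hypothetical measurements for illustration only.
measured = {"text": 0.93, "image": 0.81, "audio": 0.76}
weak = weakest_modalities(measured, threshold=0.85)
```

With these sample scores, `weak` is `["audio", "image"]`, suggesting where to focus the next round of tuning or vendor updates.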

Discover Turbo Answer's multi-model AI solutions

Ready to leverage multi-model AI in your enterprise? Turbo Answer provides production-ready solutions combining Google's Gemini 3.1 Pro with proven integration architectures. The platform delivers the accuracy, scalability, and enterprise features discussed throughout this guide.

Key benefits include up to 40% accuracy improvements on document analysis, support for 100+ languages, and deep integration across text, vision, and conversational AI. Privacy-focused architecture and cross-device accessibility meet professional requirements while maintaining ease of use. Whether you need advanced document processing, workflow automation, or creative task acceleration, Turbo Answer scales to match your demands.

Explore Turbo Answer pricing to find plans matching your usage requirements. Review business solutions for enterprise-specific capabilities and support options. Visit the Turbo Answer homepage to see the full platform and start your free trial today.

FAQ

What types of data modalities does multi-model AI typically handle?

Multi-model AI processes text, images, audio, and video within integrated frameworks. Advanced systems combine these modalities simultaneously, enabling richer understanding than single-type processing. The specific modalities supported vary by platform and use case requirements.

How does multi-model AI improve document analysis accuracy?

By jointly analyzing text content and visual layout, multi-model AI captures context that text-only systems miss. Tables, charts, signatures, and formatting all contribute to understanding. This integrated approach raises accuracy by up to 40% compared to traditional methods.

Is multi-model AI suitable for small to mid-sized enterprises?

Yes. Cloud-based platforms and managed services make multi-model AI accessible beyond large corporations. Scalable pricing models, pre-trained models, and pilot project approaches allow SMEs to start small and expand based on results. Initial investments are often comparable to the cost of hiring one additional analyst.

What are key considerations when evaluating multi-model AI platforms?

Focus on fusion integration quality, which drives performance gains. Verify modality support matches your data types. Assess scalability through reference customers and benchmarks. Review privacy features and compliance certifications carefully. Compare cost efficiency across usage tiers and projected volumes.